Hello Framework Friends, I decided to dig deep into how different RAM configurations impact power consumption, and collected some data on idle usage and on video playback both in and outside of different browsers. Results are just from my Batch 1 i7-1165G7 laptop.

Highlighted findings/TLDR:

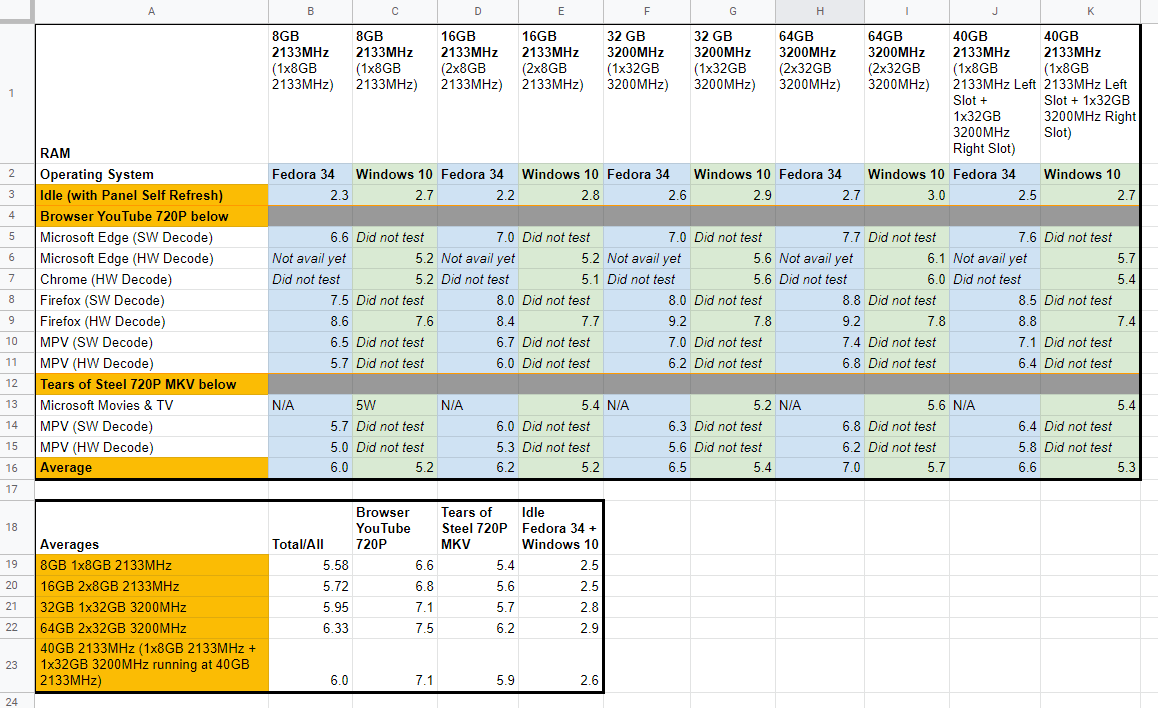

(discharge rate in watts)

| Averages | Total/All | Browser YouTube 720P | Tears of Steel 720P MKV | Idle (Fedora 34 + Windows 10) |
|---|---|---|---|---|
| 8GB (1x8GB 2133MHz) | 5.58 | 6.6 | 5.4 | 2.5 |
| 16GB (2x8GB 2133MHz) | 5.72 | 6.8 | 5.6 | 2.5 |
| 32GB (1x32GB 3200MHz) | 5.95 | 7.1 | 5.7 | 2.8 |
| 64GB (2x32GB 3200MHz) | 6.33 | 7.5 | 6.2 | 2.9 |
| 40GB (1x8GB 2133MHz + 1x32GB 3200MHz, both running at 2133MHz) | 6.0 | 7.1 | 5.9 | 2.6 |
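To put the Total/All column in relative terms, here's a quick Python sketch that computes each configuration's increase over the 1x8GB 2133MHz baseline. The wattages are copied from the table; the short labels are just shorthand.

```python
# "Total/All" averages in watts, copied from the table above.
TOTAL_AVG_W = {
    "1x8GB 2133MHz": 5.58,
    "2x8GB 2133MHz": 5.72,
    "1x32GB 3200MHz": 5.95,
    "2x32GB 3200MHz": 6.33,
    "8GB+32GB @ 2133MHz": 6.0,
}


def relative_to_baseline(data: dict[str, float], baseline: str) -> dict[str, tuple[float, float]]:
    """Return {config: (extra watts, percent increase)} relative to `baseline`."""
    base = data[baseline]
    return {
        name: (round(watts - base, 2), round(100 * (watts - base) / base, 1))
        for name, watts in data.items()
    }


for name, (delta, pct) in relative_to_baseline(TOTAL_AVG_W, "1x8GB 2133MHz").items():
    print(f"{name:20s} +{delta:.2f} W (+{pct:.1f}%)")
```

By this measure, the 2x32GB 3200MHz config draws about 0.75W (roughly 13%) more than the single 8GB stick on average.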


In order of impact on power consumption (least to greatest), it appears to be:

- Using an extra RAM slot:
  - Seems negligible.
- Size (e.g. 8GB vs. 16GB):
  - Probably dependent on the specific running task.
- Higher frequency:
  - Seems to have a significant impact. I assume this is because the Tiger Lake Xe GPU can run faster with the higher memory frequency: going from 2133MHz → 3200MHz bumps 3DMark's Fire Strike score by ~200-300 points. Also, the i7-1165G7's GPU is faster than the i5-1135G7's, so I'm curious about i5-1135G7 numbers.

So I think the biggest culprit may be the higher RAM frequency: it improves Tiger Lake Xe GPU performance, but it may also be causing video playback with HW decoding, and general graphics…things?, to consume more battery. If you're trying to squeeze out the most battery life, it looks like 3200MHz uses significantly more power than 2133MHz.

Compare in-browser YouTube 720P playback in Microsoft Edge (HW decode) on Windows (shown in [1]):

1x8GB 2133MHz @ 5.2W vs. 2x32GB 3200MHz @ 6.1W
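For a rough sense of what that gap means in runtime, here's a back-of-the-envelope Python sketch. It assumes a constant draw and a 55Wh battery (an assumed nominal capacity for the Batch 1 pack, not something measured here; real usable capacity is lower), so treat the hours as relative rather than absolute.

```python
BATTERY_WH = 55.0  # assumption: nominal capacity; usable capacity is lower and degrades


def runtime_hours(draw_w: float, capacity_wh: float = BATTERY_WH) -> float:
    """Hours from full to empty at a constant discharge rate."""
    return capacity_wh / draw_w


low = runtime_hours(5.2)   # 1x8GB 2133MHz, Edge YouTube 720P
high = runtime_hours(6.1)  # 2x32GB 3200MHz, same test
print(f"{low:.1f} h vs {high:.1f} h ({low - high:.1f} h, {100 * (1 - high / low):.0f}% less)")
# prints: 10.6 h vs 9.0 h (1.6 h, 15% less)
```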

How can we possibly mitigate this?

- Ability to turn off a RAM slot (trading dual-channel performance for battery life by running single channel), probably in the BIOS

- Ability to downclock RAM (trading performance, with the GPU being a big factor, for battery life), probably in the BIOS

Tangentially:

- Ability to downclock the GPU?

- Ability to undervolt (which, sigh, isn’t possible at the moment on Tiger Lake-U. Alas Intel, alas.)

Notes:

- These are just my findings at this point in time; who knows what will change in the future.

- Don't pay too much attention to the Linux vs. Windows averages, as browser hardware decoding on Linux is currently very experimental/alpha/beta/buggy/wonky, whatever one wants to call it.

- Firefox HW decode, at least on my Fedora install, is wonky [2].

- These numbers are skewed towards idle time and video playback, so YMMV depending on your workflow; e.g. CPU/RAM-intensive tasks may behave differently.

- I tried to take an average consumption once the numbers stabilized, and erred on the lower side. 0.05W was rounded up, so take that into account in the margin of error.
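A minimal Python sketch of the "average, then round 0.05W up" rule. It uses `decimal` because the built-in `round()` rounds exact ties to even and works on the binary float value (the Python docs' own example: `round(2.675, 2)` gives `2.67`). Averaging first and rounding second is an assumption about the order, and `rounded_average` is a hypothetical helper name.

```python
from decimal import ROUND_HALF_UP, Decimal
from statistics import fmean


def rounded_average(samples_w: list[float]) -> Decimal:
    """Average the readings, then round to 0.1 W with .x5 ties going up."""
    # repr() gives the shortest decimal form, e.g. "5.55" rather than 5.5499999...
    avg = Decimal(repr(fmean(samples_w)))
    return avg.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)


print(rounded_average([5.55]))  # 5.6
```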

[1] Full data:

Miscellaneous thoughts:

- At this point I've opened the top panel probably at least 50 times, maybe closer to 100. The battery disconnect option in the BIOS is oh-so-awesome. I have System Setup (BIOS) in my GRUB bootloader. Enter BIOS, Battery Disconnect, lift the top panel off (I keep all 5 bottom screws undone), swap out RAM, set the top panel back down to attach magnetically, and leave the screws undone. So. Nice.

  Edit: Clarification: I only kept the screws undone during testing and would not recommend leaving them undone during regular use. See my reasoning here.

- Please ensure the RAM is inserted all the way. Once I mistakenly didn't pop it in all the way and sat at a black screen for a minute ("it's just doing the RAM change detect sequence…wait, this is taking longer than usual…wait…checks RAM…oh sh–…*pops RAM all the way in*…sweats nervously…turns back on…whew, didn't short anything").

- I've read that Tiger Lake is very efficient with hardware video decoding, and we can see that it's pretty good on Windows (MS Edge and Chrome). HW decoding in browsers on Linux seems…iffy at the moment, anyway.

Methodology

SW = Software (software video decoding)

HW = Hardware (hardware video decoding)

Controls:

- CPU: i7-1165G7

- Memory kits used:
  - 16GB (2x8GB), 2133MHz: SK Hynix (too lazy to look up/verify the timings), 1.2V, model: HMA41GS6AFR8N
  - 64GB (2x32GB), 3200MHz: G.Skill RipJaws Series, CL22-22-22-52, 1.2V, model: F4-3200C22D-64GRS

- A single USB-C expansion card plugged in, so no HDMI/SD card etc.

- 10% brightness

- 50-75% speaker volume

- Single-stick RAM configs tested in the left slot

Linux: Fedora 34 (vanilla 5.14.0 kernel for the PSR fix, stability unknown)

- Using TLP with powersave governor

- Using intel-media-driver, not libva-intel-driver

- Microsoft Edge Beta Version 93.0.961.33

- Firefox version 91.0.2 (64-bit)

- Wayland Firefox: `MOZ_ENABLE_WAYLAND=1 MOZ_DISABLE_RDD_SANDBOX=1 firefox`

- Wayland Firefox seems to use less battery on Sway, e.g. YouTube 720P, no HW decode: ~11W

- Wayland Firefox:
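To double-check the powersave governor from the TLP bullet, each CPU's current governor can be read from sysfs (TLP sets it on battery through `CPU_SCALING_GOVERNOR_ON_BAT` in its config). A small Python sketch; `governors` is a hypothetical helper name.

```python
from collections import Counter
from pathlib import Path


def governors(cpu_root: Path = Path("/sys/devices/system/cpu")) -> Counter:
    """Count how many CPUs report each cpufreq scaling governor."""
    return Counter(
        path.read_text().strip()
        for path in cpu_root.glob("cpu[0-9]*/cpufreq/scaling_governor")
    )


if __name__ == "__main__":
    counts = governors()
    print(dict(counts) or "no cpufreq information found")
    if counts and set(counts) != {"powersave"}:
        print("warning: not every CPU is using the powersave governor")
```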

[2] Important: Firefox HW decode, at least on my Fedora install, doesn't work as expected:

Something seems wonky, as hardware decoding consumes more power than software decoding.

- I did jump through some hoops

- There are open bugs

- Still seems beta-ish at the moment

So take that as you will. I confirmed HW/SW decoding with intel_gpu_top.

Windows 10 (build 19042.1202)

- Using battery saver profile

- Microsoft Edge 93.0.961.38 (64-bit)

- Chrome 93.0.4577.63 (64-bit)

- Firefox version 91.0.2 (64-bit)

- Microsoft Movies & TV 10.21061.1012.0

Idle Tests:

I just let the computer idle. To confirm that it successfully idles, check that the CPU is mostly in C9/C10 states.

I've found that anything that constantly changes what's displayed on the screen, like a flashing alert, will keep the CPU out of C9/C10 and prevent low idle power consumption.

I'm assuming it's due to how Panel Self Refresh (PSR) works: PSR only kicks in while the screen content is static, and it seems to be needed to reach C9/C10.
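On Linux, one way to sanity-check idle states without extra tools is the kernel's cpuidle sysfs interface: each `/sys/devices/system/cpu/cpuN/cpuidle/stateM` directory has a `name` and a cumulative `time` in microseconds. This is a minimal, hypothetical Python script, not part of the measurements above. State names depend on the idle driver, and these are per-core states; package C-state residency (what powertop and turbostat report as package C-states) isn't exposed here.

```python
import sys
import time
from pathlib import Path


def read_idle_times(cpu_root: Path = Path("/sys/devices/system/cpu")) -> dict[str, int]:
    """Sum cumulative residency (microseconds) per cpuidle state name across all CPUs."""
    totals: dict[str, int] = {}
    for state in sorted(cpu_root.glob("cpu[0-9]*/cpuidle/state[0-9]*")):
        name = (state / "name").read_text().strip()
        totals[name] = totals.get(name, 0) + int((state / "time").read_text())
    return totals


def idle_share(before: dict[str, int], after: dict[str, int]) -> dict[str, float]:
    """Fraction of the idle time between two snapshots spent in each state."""
    deltas = {name: after[name] - before.get(name, 0) for name in after}
    total = sum(deltas.values())
    return {name: d / total for name, d in deltas.items()} if total else {}


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python3 idle_share.py 60   (then leave the laptop alone)
    start = read_idle_times()
    time.sleep(float(sys.argv[1]))
    shares = idle_share(start, read_idle_times())
    for name, share in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
        print(f"{name:8s} {share:6.1%}")
```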

Browser YouTube Tests:

Done by selecting 720P, not auto, so quality doesn’t change mid-video.

The video is almost fullscreen: window maximized, YouTube in theater mode.

I let videos play for a few minutes to let things stabilize, the video buffer, etc. Numbers are rough averages of what I observed and may spike when buffering more, etc. I've noticed periodic slight increases in power consumption, which I assume happen when the browser downloads more of the YouTube video.

It seems like a larger on-screen video size equates to more power consumption.

You can probably also assume some increase in power consumption when playing above 720P.
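On Linux, one way to log the discharge rate over a few minutes is the battery's power_supply sysfs entry. A hedged Python sketch: the battery directory (e.g. `/sys/class/power_supply/BAT1`) is passed on the command line because its name varies, so check `ls /sys/class/power_supply`. It uses `power_now` (µW) when the driver provides it and `current_now` × `voltage_now` otherwise, and reports the median alongside the mean so periodic buffering spikes skew the result less. The script and function names are hypothetical.

```python
import statistics
import sys
import time
from pathlib import Path


def read_power_w(bat: Path) -> float:
    """Current discharge rate in watts from a power_supply sysfs directory."""
    if (bat / "power_now").exists():
        return abs(int((bat / "power_now").read_text())) / 1e6  # µW -> W
    current_ua = int((bat / "current_now").read_text())  # µA (sign varies by driver)
    voltage_uv = int((bat / "voltage_now").read_text())  # µV
    return abs(current_ua * voltage_uv) / 1e12  # µA x µV = 1e-12 W


def sample(bat: Path, count: int, interval_s: float = 1.0) -> list[float]:
    """Take `count` readings, `interval_s` seconds apart."""
    readings = []
    for _ in range(count):
        readings.append(read_power_w(bat))
        time.sleep(interval_s)
    return readings


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python3 discharge.py /sys/class/power_supply/BAT1   (on battery!)
    readings = sample(Path(sys.argv[1]), count=180)
    print(f"mean {statistics.fmean(readings):.2f} W, "
          f"median {statistics.median(readings):.2f} W")
```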

To check hardware decoding in Chromium, open DevTools: the Media panel shows which video decoder is in use.

YouTube in mpv with youtube-dl VAAPI HW Decode

```sh
mpv --ytdl-format="bestvideo[height<=720][vcodec!=avc1]+bestaudio/best" [youtube-url-without-brackets]
```

mpv playing YouTube avc1 codec:

CTRL+H toggles hardware decoding on/off.
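If you'd rather not toggle with CTRL+H, mpv's `--hwdec` option can request VA-API decoding from the start. Here's a small, hypothetical Python helper that builds the same mpv command with `--hwdec=vaapi` added. The `codec_filter` parameter is an assumption for convenience: the default `[vcodec!=avc1]` skips H.264 so youtube-dl picks VP9 or AV1, while `[vcodec^=avc1]` asks for the avc1 stream instead.

```python
import shlex
import sys


def mpv_command(url: str, max_height: int = 720,
                codec_filter: str = "[vcodec!=avc1]",
                hwdec: str = "vaapi") -> list[str]:
    """Build an mpv argv: cap the height, filter the codec, request a hwdec API."""
    fmt = f"bestvideo[height<={max_height}]{codec_filter}+bestaudio/best"
    return ["mpv", f"--hwdec={hwdec}", f"--ytdl-format={fmt}", url]


if __name__ == "__main__" and len(sys.argv) > 1:
    # Print a shell-quoted command line you can paste into a terminal.
    print(shlex.join(mpv_command(sys.argv[1])))
```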

Confirm with intel_gpu_top that you're actually hardware decoding: the Video engine should show a busy percentage above 0%.